Monday, October 27, 2008

FireSpider extension for Firebug

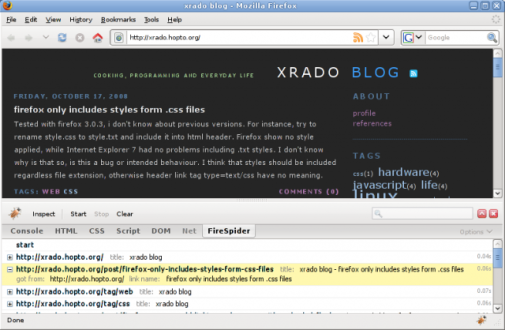

The extension lets you start and stop the spider. After you press the start button, it fetches the current URL and parses the content for new URLs. Every unique URL is fetched only once. It follows only URLs on the current domain and detects the content type of each one. Non-HTML content types are reported. Besides fetching, the Firebug panel shows the page title, the page the URL was found on, its link name, and its loading time. URLs that were not found and URLs that timed out after 10 seconds are reported too. Requests are sent one after another, so some sites may find it too aggressive or may flag you as a dangerous script. Be careful.
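To make the crawl described above a bit more concrete, here is a rough sketch of the same idea. It is not the extension's actual code: it is written in Python rather than the JavaScript a Firefox extension uses, and every name in it (`crawl`, `LinkParser`, the `fetch` callable and the report fields) is made up for illustration. The `fetch` argument is assumed to return a status, a content type and a body, and to raise `TimeoutError` when a request takes too long, so enforcing something like the 10 second limit would be up to that function.

```python
import time
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urldefrag, urlparse


class LinkParser(HTMLParser):
    """Collects the page title and (href, link text) pairs from HTML."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.links = []          # list of (href, link text)
        self._in_title = False
        self._href = None        # href of the <a> currently open, if any
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self._href, self._text = href, []

    def handle_data(self, data):
        if self._in_title:
            self.title += data
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False
        elif tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def crawl(start_url, fetch):
    """Crawl one host, one request at a time, visiting each URL once.

    `fetch(url)` is a hypothetical callable returning
    (status, content_type, body) or raising TimeoutError.
    """
    host = urlparse(start_url).netloc
    seen = {start_url}
    queue = deque([(start_url, None, None)])   # (url, found on, link name)
    report = []
    while queue:
        url, referrer, link_name = queue.popleft()
        entry = {"url": url, "from": referrer, "link": link_name}
        report.append(entry)
        started = time.monotonic()
        try:
            status, content_type, body = fetch(url)
        except TimeoutError:
            entry["result"] = "timeout"
            continue
        entry["seconds"] = time.monotonic() - started
        if status == 404:
            entry["result"] = "not found"
            continue
        if not content_type.startswith("text/html"):
            # Non-HTML content is reported but not parsed for links.
            entry["result"] = "non-html: " + content_type
            continue
        parser = LinkParser()
        parser.feed(body)
        parser.close()
        entry["result"] = "ok"
        entry["title"] = parser.title.strip()
        for href, text in parser.links:
            # Resolve relative links and drop "#fragment" parts.
            target = urldefrag(urljoin(url, href)).url
            if urlparse(target).netloc == host and target not in seen:
                seen.add(target)
                queue.append((target, url, text))
    return report
```

The `seen` set is what guarantees that every unique URL is fetched only once, and comparing the host of each link with the host of the start URL keeps the crawl on the current domain. Because the queue is worked through one entry at a time, requests go out strictly one after another, just like described above.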

This is the first version (hopefully not the last) and the first extension I have ever made. Some things may not be very well done, but the basic functionality works, I think. Try it and tell me what you think. I hope you find it useful.

firespider-0.1.xpi ( download / install )

FireSpider on GitHub